AI-assisted facial recognition and tagging is becoming increasingly common inside Digital Asset Management (DAM) platforms. In the past year, several major DAM providers, including Pics.io, MediaValet, and PhotoShelter, have released face tagging as a feature. For teams that manage event photos, automated face detection and tagging has a compelling value proposition: faster identification of guests, shorter turnaround times for marketing and outreach, and dramatically reduced manual workloads for labeling metadata.

However, what appears to be a simple workflow optimization is, in fact, a shift into biometric processing. That shift carries consequences that most DAM platforms and users have not fully internalized.

The regulatory landscape governing biometric identifiers has matured rapidly over the past decade. Enforcement is no longer theoretical, and the distinction between owning a photograph and processing the biometric information contained within it is now well established in statutory text, regulatory guidance, and case law. For organizations tagging guests in large volumes of event photos, the lack of an auditable consent architecture is becoming increasingly indefensible.

Why Tagging Photos Needs Biometric Consent

Face tagging within a DAM platform involves more than attaching metadata to a file. The technical process typically includes detecting a face within an image, extracting geometric features, converting those features into a mathematical representation often referred to as a template or embedding, and comparing that template across a database to identify recurring individuals.
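To make the final step concrete, the following is a minimal Python sketch of a 1:N comparison, assuming the detection and embedding stages (not shown) have already turned each face into a fixed-length vector of floats. The function names, the use of cosine similarity, and the 0.6 threshold are illustrative assumptions, not any particular DAM vendor’s method.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def identify_1_to_n(
    probe: list[float],
    gallery: dict[str, list[float]],
    threshold: float = 0.6,  # hypothetical cut-off; real systems tune this per model
) -> Optional[str]:
    """Compare one probe template against every stored template (a 1:N search).

    Returns the ID of the best-scoring match at or above the threshold, or None.
    """
    best_id: Optional[str] = None
    best_score = threshold
    for template_id, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score >= best_score:
            best_id, best_score = template_id, score
    return best_id
```

Note that the `gallery` dictionary in this sketch is the kind of stored template collection that biometric statutes regulate; the photographs themselves never appear in it.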

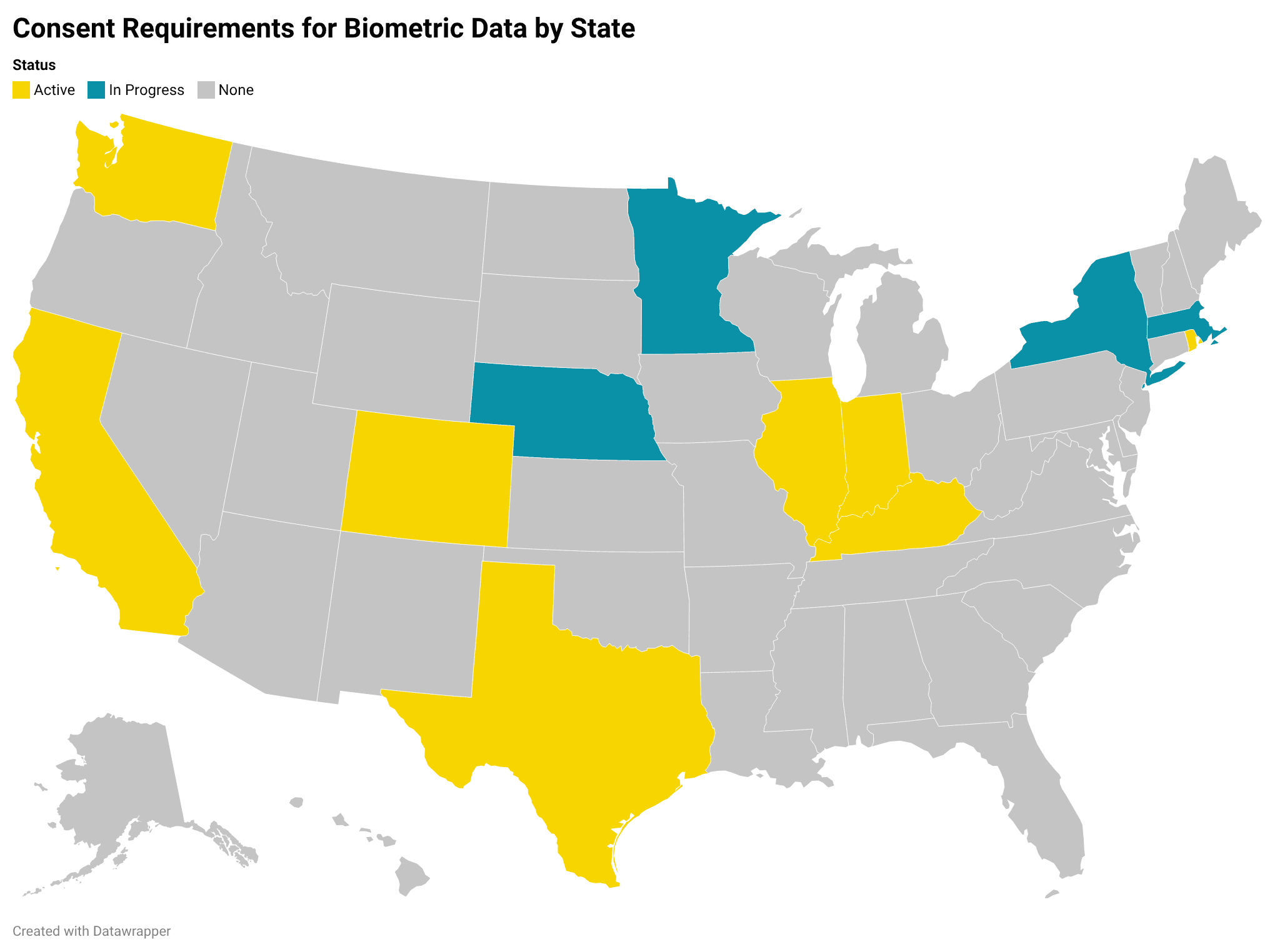

Regulators and courts have made it clear that this mathematical representation, not the photograph itself, is the regulated object and requires express consent. As of 2026, eight states have laws in place that require documented consent for biometric processing, while four more have pending legislation (Figure 1).

Perhaps the best-known regulation is the Illinois Biometric Information Privacy Act (BIPA), which provides that a private entity that collects, captures, or otherwise obtains a biometric identifier must first provide written notice and obtain written consent. The statute does not condition its applicability on whether the data is sold, shared publicly, or used for surveillance. The act of capture and storage is sufficient to trigger its obligations.

In Texas, the Capture or Use of Biometric Identifier (CUBI) Act similarly prohibits the capture of a biometric identifier for a commercial purpose without informed consent. Enforcement actions by the Texas Attorney General have demonstrated that “commercial purpose” is interpreted broadly, encompassing operational uses within a company’s core services.

Washington, Iowa, and California have similar provisions under RCW 19.375, the Iowa Consumer Data Protection Act (ICDPA), and the California Consumer Privacy Act (CCPA), respectively, while Indiana, Kentucky, and Rhode Island have enacted comprehensive privacy laws, in effect since early 2026, that establish opt-in and opt-out consent requirements. At the municipal level, New York City and Portland, Oregon, have also enacted ordinances restricting the commercial collection and use of biometric data.

Within the European Union and the United Kingdom, the General Data Protection Regulation (GDPR) and its UK counterpart, the UK GDPR, classify biometric data processed for the purpose of uniquely identifying a natural person as special category data under Article 9. Processing such data is prohibited unless a specific exception applies; for private organizations, the most commonly relied-upon exception is explicit consent. Guidance from the UK Information Commissioner’s Office (ICO) confirms that identification involving comparison against a database constitutes regulated biometric processing.

For DAM platforms and their users, the implication is straightforward: when a system extracts facial geometry and enables 1:N identification across a library, it is no longer merely organizing images. It is maintaining a biometric database.

Photo Ownership Does Not Confer Consent

A common misconception is that ownership of the photograph grants the right to process everything within it. From a copyright perspective, that assumption may be defensible. From a biometric consent perspective, it is not.

Courts interpreting BIPA have rejected arguments that information derived from photographs is exempt simply because the underlying image is not itself a biometric identifier. The mathematical scan of facial geometry derived from that image falls squarely within the statutory definition. In other words, an organization may own the digital asset while the individual retains statutory rights over the biometric information extracted from it. A DAM system that treats facial templates as ordinary metadata fails to reflect the layered rights structure that modern privacy law imposes and leaves its users exposed to litigation.

The Operational Risk of Consentless Tagging

Operationally, obtaining and documenting consent introduces friction, especially for teams that have already tagged hundreds or thousands of people. From a compliance standpoint, however, the exposure created by failing to do so far outweighs that friction.

BIPA provides a private right of action, with statutory damages of $1,000 per negligent violation and $5,000 per reckless or intentional violation, without requiring proof of actual harm. Texas law authorizes civil penalties of up to $25,000 per violation through Attorney General enforcement. These figures are not merely theoretical: large-scale biometric settlements have reached into the hundreds of millions of dollars and, in Texas, into the billions (for example, the $650 million Facebook BIPA class settlement in Illinois and the Texas Attorney General’s settlements with Meta and Google).

In August 2024, BIPA was amended so that repeated collection of the same individual’s biometric data by the same method counts as a single violation, limiting the accrual of per-scan damages. Even so, the baseline exposure remains substantial when multiplied across thousands of event attendees. Moreover, biometric statutes and comprehensive privacy laws frequently impose independent obligations regarding retention schedules, data destruction, and transparency. An organization that continues to store templates after the purpose of collection has expired may face liability even in the absence of a data breach.
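As a back-of-the-envelope illustration of that multiplication, the sketch below computes the BIPA statutory-damages range for a hypothetical number of Illinois attendees, assuming the post-amendment rule of one violation per person. It deliberately ignores actual damages, attorneys’ fees, and penalties under other statutes such as CUBI, so it is a rough illustration rather than a damages model.

```python
# Illustrative BIPA statutory-damages range, assuming each person tagged
# counts as a single violation (the post-2024-amendment rule). Ignores actual
# damages, attorneys' fees, and other statutes such as Texas CUBI.
NEGLIGENT_PER_PERSON = 1_000  # USD per negligent violation
RECKLESS_PER_PERSON = 5_000   # USD per intentional or reckless violation


def bipa_exposure_range(people_tagged: int) -> tuple[int, int]:
    """Return (negligent, reckless) statutory-damages totals in USD."""
    if people_tagged < 0:
        raise ValueError("people_tagged must be non-negative")
    return (
        people_tagged * NEGLIGENT_PER_PERSON,
        people_tagged * RECKLESS_PER_PERSON,
    )


low, high = bipa_exposure_range(2_000)  # hypothetical: 2,000 Illinois attendees
print(f"${low:,} to ${high:,}")  # prints "$2,000,000 to $10,000,000"
```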

The operational gain of skipping a consent intake workflow is modest, while the downside risk is structural: it can severely affect balance sheets, insurance coverage, procurement decisions, and reputational standing.

Why an Auditable Consent Portal Is a Governance Requirement

If face tagging constitutes biometric processing, the question for DAM users is not whether to implement consent, but how to implement it in a manner that is demonstrable, enforceable, and defensible. An auditable consent portal performs several essential functions:

First, it binds consent to the specific biometric template. Consent must be informed, documented, and linked to the individual. In practice, this means that each template should be associated with a time-stamped consent record, a defined purpose of processing, and any applicable jurisdictional constraints.
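One way to represent such a record is sketched below in Python. The field names, the jurisdiction codes, and the `is_valid` rule are hypothetical choices for illustration; the essential idea from the text is that each record points at one specific template and carries its own time-stamp, purpose, and jurisdiction.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical consent record bound to exactly one biometric template."""

    consent_id: str
    subject_id: str       # the individual who gave consent
    template_id: str      # the specific template this consent covers
    purpose: str          # defined purpose, e.g. "event gallery tagging"
    jurisdiction: str     # e.g. "US-IL" or "EU"; drives notice and retention rules
    granted_at: datetime  # time-stamp of consent (timezone-aware UTC)
    expires_at: Optional[datetime] = None
    revoked_at: Optional[datetime] = None

    def is_valid(self, at: Optional[datetime] = None) -> bool:
        """True if consent was granted, and not yet revoked or expired, at `at`."""
        at = at or datetime.now(timezone.utc)
        if at < self.granted_at:
            return False
        if self.revoked_at is not None and self.revoked_at <= at:
            return False
        if self.expires_at is not None and self.expires_at <= at:
            return False
        return True
```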

Second, it separates detection and clustering from labeling. A well-architected system can let facial clustering group similar faces anonymously, by similarity alone, while preventing the assignment of a real-world name unless a valid consent record exists. This separation between clustering and identity enrollment preserves much of the findability value that marketing and communications teams rely upon, while materially reducing the risk associated with unauthorized identification.
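A minimal sketch of that gate, assuming clusters are produced elsewhere and consent is tracked as a hypothetical mapping from template ID to consent expiry time. Clusters stay anonymous; the hypothetical `assign_name` function refuses to attach a real-world name unless every template in the cluster is covered by unexpired consent.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class FaceCluster:
    """A group of similar face templates; anonymous until a name is assigned."""

    cluster_id: str
    template_ids: list[str]
    display_name: Optional[str] = None  # real-world identity, gated by consent


class ConsentRequired(Exception):
    """Raised when a name would be assigned without valid consent."""


def assign_name(
    cluster: FaceCluster,
    name: str,
    consents: dict[str, datetime],  # hypothetical: template_id -> consent expiry (UTC)
    now: Optional[datetime] = None,
) -> None:
    """Attach a name only if every template in the cluster has unexpired consent."""
    now = now or datetime.now(timezone.utc)
    missing = [t for t in cluster.template_ids
               if t not in consents or consents[t] <= now]
    if missing:
        raise ConsentRequired(f"no valid consent for templates: {missing}")
    cluster.display_name = name
```

Requiring consent for every template, rather than for the cluster as a whole, is a conservative design choice: similarity clustering can mistakenly merge two different people, and a per-template check prevents one person’s consent from authorizing the labeling of someone else.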

Third, it operationalizes revocation and retention. Under the GDPR, consent must be as easy to withdraw as it is to give. Biometric statutes in the United States, including BIPA and CUBI, require destruction once, or within a defined period after, the purpose of collection has been satisfied; BIPA additionally caps retention at three years after the individual’s last interaction with the organization. An auditable portal must therefore trigger automatic removal of identity labels and, where required, deletion of associated templates when consent is withdrawn or expires. Manual processes are rarely sufficient at scale.
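The enforcement step can be sketched as a sweep that runs on a schedule (a nightly job, for example, is an assumption here). The `TemplateEntry` fields and the per-entry `delete_on_withdrawal` flag are hypothetical; the flag stands in for the jurisdiction-specific rule about whether a lapsed template must be deleted or merely unlabeled.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class TemplateEntry:
    """Hypothetical stored face template with its identity label and consent state."""

    template_id: str
    label: Optional[str]  # real-world name, if one was assigned
    consent_expires_at: datetime
    consent_revoked_at: Optional[datetime] = None
    delete_on_withdrawal: bool = True  # assumption: set per jurisdiction or policy


def enforce_consent(store: dict[str, TemplateEntry], now: datetime) -> list[str]:
    """Strip labels and, where required, delete templates whose consent has lapsed.

    Returns the IDs of deleted templates so the caller can log each deletion.
    """
    deleted = []
    for template_id, entry in list(store.items()):  # copy: we delete while iterating
        revoked = (entry.consent_revoked_at is not None
                   and entry.consent_revoked_at <= now)
        expired = entry.consent_expires_at <= now
        if not (revoked or expired):
            continue
        entry.label = None  # the identity label is always removed
        if entry.delete_on_withdrawal:
            del store[template_id]
            deleted.append(template_id)
    return deleted
```

Returning the deleted IDs lets the caller write each deletion to an audit log rather than relying on a manual record.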

Fourth, it creates an evidentiary record. In the event of regulatory inquiry or litigation, the organization must be able to demonstrate when a template was created, under what authority, how long it was retained, and when it was deleted. Absent detailed logs and system-enforced controls, such representations are difficult to substantiate.
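A common pattern for such logs is hash chaining, in which each entry stores the hash of the previous one so that later edits or reordering become detectable. The minimal Python sketch below is an illustrative assumption, not a description of any specific product; the event names and the `authority` field are hypothetical. It is tamper-evident rather than tamper-proof, so a production system would also need write-once storage or an external anchor for the latest hash.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained log of biometric lifecycle events (illustrative)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, event: str, template_id: str, authority: str) -> dict:
        """Append an event such as "template_created" or "template_deleted"."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "event": event,
            "template_id": template_id,
            "authority": authority,  # e.g. the consent record that authorized the action
            "at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False if any entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```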

For procurement teams evaluating DAM vendors, the absence of these capabilities should be viewed as a material compliance gap rather than a feature omission.

Portraiteer as Consent-Centric Infrastructure

Portraiteer’s architecture is designed around the premise that biometric processing in event photography must be consent-governed from inception.

Rather than treating consent as an external form stored in a CRM or ticketing platform, Portraiteer integrates consent capture and template management. Clustering can occur without immediate identity enrollment. Name assignment is conditioned on the presence of a valid consent record. Revocation triggers system-level enforcement. Audit logs document each stage of the lifecycle.

This approach aligns operational efficiency with statutory requirements. Marketing teams retain rapid access to relevant images. Development teams can locate stakeholder appearances. Event organizers can provide curated galleries. At the same time, the system produces a defensible record demonstrating that biometric identifiers were processed pursuant to explicit authorization and in accordance with defined retention policies.

Compliance for the Next Generation of DAM

AI-enabled face tagging is not inherently unlawful, nor is it incompatible with teams hoping to use it to optimize event photo workflows. The critical issue is governance design.

A DAM platform that performs biometric identification without an auditable consent layer effectively converts a media library into an unmanaged biometric database. Conversely, a platform such as Portraiteer, which integrates consent binding, revocation controls, retention automation, and audit logging, transforms biometric processing into a controlled, transparent function aligned with modern privacy expectations.

For organizations operating in multiple jurisdictions and managing thousands of event images annually, that distinction has concrete consequences. It is central to operational resilience, insurability, and long-term solvency.

In the current enforcement climate, the defensible position is not that face tagging is internal, convenient, or industry-standard. The defensible position is that it is lawful, consent-based, and demonstrably governed.